Intel HD Graphics vs AMD Radeon

AMD vs Intel Integrated Graphics: Can't We Get Any Faster?

Who makes the best integrated graphics solution, AMD or Intel? The answer is simple right now if you check our GPU benchmarks hierarchy: AMD wins, easily, at least on the desktop. Current Ryzen APUs with Vega 11 Graphics are nearly 2.5 times faster than Intel's UHD Graphics 630. Of course, even the fastest integrated solutions pale in comparison to a dedicated GPU, and they're not on our list of the best graphics cards for a good reason. However, a lot of changes are coming this year, sooner rather than later.

Update: We've added Intel's Gen11 Graphics using an Ice Lake Core i7-1065G7 processor. Thanks to Razer loaning us a Razer Blade Stealth 13, and HP chiming in with an Envy 17t, we were able to test Intel Gen11 Graphics with both a 15W (default) and 25W (Razer) TDP. We've also added GTX 1050 results running on a Ryzen 5 3400G, which limits performance a bit at 720p and minimum quality. We have not fully updated the text, as we'll have a separate article looking specifically at Gen11 Graphics performance.

Judging by all the leaks, AMD's Renoir desktop APUs should show up very soon. Meanwhile, AMD's RDNA 2 architecture is coming (and should eventually end up in an APU), and Intel's Tiger Lake with Xe Graphics should also arrive this summer. Unfortunately, as the saying goes, the more things change…

To give us a clear picture of where we are and where we've come from, specifically in regards to integrated graphics solutions, we've run updated benchmarks using our standard GPU test suite, with a few modifications. We're using the same nine games (Borderlands 3, The Division 2, Far Cry 5, Final Fantasy XIV, Forza Horizon 4, Metro Exodus, Red Dead Redemption 2, Shadow of the Tomb Raider, and Strange Brigade), but we're running at 720p (no resolution scaling) and minimum fidelity settings.

Some of these games are still relatively demanding, even at 720p, but all have been available for at least six months, which is plenty of time to fix any driver issues, assuming they could be fixed. We intend to see if the games work at all, and what sort of performance you can expect. The good news: Every game was able to run! Or, at least, they ran on current GPUs. Spoiler alert: Intel's HD 4600 and older integrated graphics don't have DX12 or Vulkan drivers, which eliminated several games from our list.

We benchmarked Intel's current desktop GPU (UHD Graphics 630) along with an older i7-4770K (HD Graphics 4600) and compared them with AMD's current competing desktop APUs (Vega 8 and Vega 11). For this update, we also have results from Ice Lake's Gen11 Graphics, but that's only for mobile solutions, so it's in a different category. We're still working to get a Renoir processor (AMD Ryzen 7 4800U) in for comparison, along with desktop Renoir when that launches.

We've also included performance from a budget dedicated GPU, the GTX 1050. The GTX 1050 is by no means one of the fastest GPUs right now, though you can try picking up a used model off eBay for around $100. (Note: don't get the 'fake' China models, as they likely aren't using an actual GTX 1050 chip!) And before you ask, no, we didn't have a previous-gen AMD A10 (or earlier) APU for comparison.

Test Hardware

Because we're looking at multiple different integrated graphics solutions, our test hardware needed three different platforms. We've used mostly high-end parts, including better-than-average memory and storage, but the systems are not (and couldn't be) identical in all respects. One particular issue is that we needed motherboards with video output support on the rear IO panel, which restricted options. Here are the testbeds and specs.

| Intel UHD Graphics 630 | AMD Vega 11/8 Graphics | Intel HD Graphics 4600 | Intel Iris Plus Graphics (25W) | Intel Iris Plus Graphics (15W) |
|---|---|---|---|---|
| Core i7-9700K | Ryzen 5 3400G, 2400G; Ryzen 3 3200G | Core i7-4770K | Core i7-1065G7 | Core i7-1065G7 |
| Gigabyte Z370 Aorus Gaming 7 | MSI MPG X570 Gaming Edge WiFi | Gigabyte Z97X-SOC Force | Razer Blade Stealth 13 | HP Envy 17t (15W) |
| 2x16GB Corsair DDR4-3200 CL16 | 2x8GB G.Skill DDR4-3200 CL14 | 2x8GB G.Skill DDR3-1600 CL9 | 2x8GB LPDDR4X-3733 | DDR4-3200 |
| XPG 8200 Pro 2TB M.2 | Corsair MP600 2TB M.2 | Samsung 860 Pro 1TB | 256GB NVMe SSD | 512GB NVMe SSD |

We equipped the AMD Ryzen platform with 2x8GB DDR4-3200 CL14 memory because our normal 2x16GB CL16 kit proved troublesome for some reason. It shouldn't matter much, as none of the tests benefit from large amounts of RAM (preferring throughput instead), and the tighter timings may even give a very slight boost to performance. The older HD 4600 setup used the only compatible motherboard I still have around, and the only DDR3 kit as well, but both were previously high-end options.

The Razer and HP laptops with Intel's 10th Gen Core i7-1065G7 and Iris Plus Graphics are a step up from previous Intel GPUs, with the Razer running LPDDR4X-3733 memory while the HP uses DDR4-3200 memory. That gives Razer (and Gen11 Graphics) a slight advantage that may account for some of the difference in performance, though the higher 25W TDP when using the performance profile appears to be the biggest factor based on our testing.

The storage also varied based on what was available (and I didn't want to reuse the same drive, as that would entail wiping it between system tests). It shouldn't be a factor for these gaming and graphics tests, though testing large games off the Razer's 256GB (minus the OS) storage was a pain in the rear. How can 256GB be the baseline on a $1,700 gaming laptop in 2020?

Performance of AMD vs Intel Integrated Graphics

Let's cut straight to the heart of the matter. If you want to run modern games at modest settings like 1080p medium, none of these integrated graphics solutions will suffice, at least not across all games. At 1080p and medium settings, AMD's Vega 11 in the 3400G averaged 27 fps across the nine games, with only two games (Forza Horizon 4 and Strange Brigade) breaking the 30 fps mark, and Metro Exodus failed to hit 20 fps.

1080p medium actually looks quite decent, not far off what you'd get from an Xbox One or PlayStation 4 (though not the newer One X or PS4 Pro). Dropping the resolution or tuning the quality should make most other games playable, but we opted to do both, running at 1280x720 and minimum quality settings. We've also tested 3DMark Fire Strike and Time Spy for synthetic graphics performance.

Starting with the big-picture overall view of gaming performance, you might be surprised to see the GTX 1050 basically doubling the performance of the fastest integrated graphics solution. Yup, it's a pretty big gap, helped by the faster desktop CPU as well (you can see that performance drops a bit with a Ryzen 5 3400G). Interestingly, a few games like Red Dead Redemption 2 close the gap as the shared system RAM helps. The GTX 1050 has over double the bandwidth (112 GBps dedicated vs. 51.2 GBps shared), but only for 2GB of memory. Still, even the slowest dedicated GPU we might consider recommending ends up being far better than integrated graphics.

And that's only when we're looking at AMD's current offerings. The chasm between Vega 8 and Intel's UHD Graphics 630 is even larger than the gap between Vega 11 and the GTX 1050. AMD's Ryzen 3 3200G with Vega 8 Graphics is 2.3 times faster than Intel's Core i7-9700K and UHD Graphics 630. Other current-generation Intel chips can further reduce performance by limiting clocks or disabling some of the GPU processing clusters; we didn't test anything with UHD 610, for instance.

Intel's latest Iris Plus solution in the 10th Gen Ice Lake processor does better, sort of. When constrained to 15W TDP for the entire chip, it's only 15% faster than UHD 630. Boosting the TDP to 25W improves performance another 35%, however, making it 55% faster than the desktop UHD 630, even though the desktop still has an additional 70W of TDP available.

Even the fastest Iris Plus Graphics still can't catch up to a desktop Ryzen 3 3200G and Vega 8 Graphics. Part of that is the higher TDP, but a bigger factor is that AMD's GPU architecture is simply superior. Intel is hoping to fix that with Xe Graphics, and Tiger Lake looks reasonably promising. Intel just showed off TGL (TGL-U, perhaps?) running Battlefield V at 1080p high settings and around 30-33 fps. I ran that same test on 15W ICL-U and got only 10-13 fps, and 17-21 fps at 25W, with the caveat that we don't know the TDP of the Tiger Lake chip.

Compared to its current desktop graphics solutions, Intel has a lot of ground to make up. Tripling the performance of UHD 630 would get Intel to competitive levels, which isn't actually out of the question. Ice Lake and Gen11 Graphics are already 55% faster than UHD Graphics when equipped with a 25W TDP, and Tiger Lake with Xe Graphics could potentially deliver 75% (or more) higher performance than Gen11. Combined, that would be roughly 2.7X the performance of UHD 630, which would reach acceptable levels of performance in many games. We'll have to wait and conduct our own testing of Tiger Lake to see how Xe Graphics performs, but early indications are that it might not be too shabby.
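Successive "X% faster" figures like these compound by multiplication rather than by addition. The following is a minimal Python sketch of that arithmetic using only the percentages quoted in the text; the function name `compound_gains` is made up for illustration.

```python
def compound_gains(*percent_gains):
    """Combine successive 'X% faster' gains into one overall multiplier."""
    multiplier = 1.0
    for gain in percent_gains:
        multiplier *= 1.0 + gain / 100.0
    return multiplier


# Ice Lake at 15W is 15% faster than UHD 630; the 25W mode adds another 35%.
ice_lake_25w = compound_gains(15, 35)

# Gen11 at 25W is 55% faster than UHD 630; Xe Graphics might add 75% on top.
tiger_lake_estimate = compound_gains(55, 75)

print(f"Ice Lake 25W vs UHD 630: {ice_lake_25w:.2f}x")
print(f"Tiger Lake estimate vs UHD 630: {tiger_lake_estimate:.2f}x")
```

The first product is about 1.55x, matching the 55% figure, and the second lands a little above 2.7x. Simply adding the percentages would understate both.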

If you're wondering about even older Intel GPUs, the HD 4600 is roughly half as fast as UHD 630. Okay, technically it's only 42% slower, but it did fail to run four of the nine games. Three of those require either DX12 or Vulkan, while Metro Exodus supports DX11 and tried to run … but it kept locking up a few seconds into the benchmark. Below is the full suite of benchmarks.

Borderlands 3 supports DX12 and DX11 rendering modes, with the latter performing better on the Intel and Nvidia GPUs. AMD meanwhile gets a modest benefit from DX12, even on its integrated graphics, so Vega 11 is 'only' 38% slower than the GTX 1050, compared to the overall 43% deficit. Meanwhile, Intel falls further behind Vega 8 than in the overall metric, trailing by 70% here compared to 57% overall. Even at minimum settings and 720p, UHD 630 can't muster a playable experience.
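The article switches between "X% faster" and "Y% slower" figures, and the two are not interchangeable because each uses a different baseline. The sketch below shows the conversion; the helper names are hypothetical and the inputs are the percentages quoted in the text.

```python
def faster_to_slower(percent_faster):
    """If A is X% faster than B, return how many percent slower B is than A."""
    ratio = 1.0 + percent_faster / 100.0
    return (1.0 - 1.0 / ratio) * 100.0


def slower_to_faster(percent_slower):
    """If B is Y% slower than A, return how many percent faster A is than B."""
    ratio = 1.0 - percent_slower / 100.0
    return (1.0 / ratio - 1.0) * 100.0


# Overall: GTX 1050 is 72% faster than Vega 11; Vega 8 is 2.3x (130% faster than) UHD 630.
print(f"Vega 11 vs GTX 1050: {faster_to_slower(72):.1f}% slower")
print(f"UHD 630 vs Vega 8:   {faster_to_slower(130):.1f}% slower")

# Borderlands 3: Vega 11 is 38% slower than the GTX 1050.
print(f"GTX 1050 vs Vega 11: {slower_to_faster(38):.1f}% faster")
```

The overall figures convert to deficits of roughly 42% and 57%, close to the numbers quoted above. Small differences are expected, since the published percentages are themselves rounded.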

The Division 2 performance shows the trend we'll see in a lot of the more demanding games. Even at minimum quality (other than resolution scaling), Intel's UHD 630 isn't really playable. You could struggle through the game at 20 fps, but it wouldn't be very fun and dips into the low-to-mid teens occur often. AMD meanwhile averages more than 60 fps on the 3400G and comes close with the 3200G. Framerates aren't consistent, however, with odd behavior on the different AMD APUs. Specifically, the 99th percentile fps decreased as average fps increased.
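The article does not say how its 99th percentile fps is calculated. One common approach, sketched here in Python as an assumption, is to take the frame time that 99% of frames beat and convert it to fps. The frame-time lists are invented purely to show how a run can post a higher average yet a lower 99th percentile.

```python
def average_fps(frame_times_ms):
    """Average fps: total frames divided by total elapsed time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_99_fps(frame_times_ms):
    """fps at the 99th percentile frame time (nearest-rank method)."""
    ordered = sorted(frame_times_ms)
    # Integer ceiling of 0.99 * n, avoiding floating-point rounding surprises.
    rank = -(-99 * len(ordered) // 100)
    return 1000.0 / ordered[rank - 1]


# Hypothetical runs: run_b has faster typical frames but worse stutter.
run_a = [16.0] * 95 + [40.0] * 5
run_b = [14.0] * 95 + [50.0] * 5

for name, run in (("run_a", run_a), ("run_b", run_b)):
    print(f"{name}: avg {average_fps(run):.1f} fps, "
          f"99th percentile {percentile_99_fps(run):.1f} fps")
```

In this made-up data, a handful of long frames barely move the average but dominate the percentile figure, which is one plausible way to get the pattern described above.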

Far Cry 5 relative performance is almost an exact repeat of The Division 2: Vega 8 is 1.8 times faster than UHD 630, and the 1050 is about 60% faster than the 3400G. Average framerates are lower, however, so even at minimum quality, you won't get 60 fps from any of the integrated graphics solutions we tested. Hopefully Renoir, and perhaps Xe Graphics, will change that later this year.

Final Fantasy XIV is the closest Intel comes to AMD's performance, trailing by just 28%. A lot of that has to do with the game being far less demanding, especially at lower quality settings, and even Intel manages a playable 47 fps. For that matter, even the old Intel HD 4600 is somewhat playable at 27 fps. Meanwhile, the GTX 1050 has its largest lead over Vega 11, with 187 fps and 180% higher framerates. This is one of those cases where GPU memory bandwidth likely plays a bigger role, as all three AMD APUs cluster together at the 66-67 fps mark.

Shifting gears to Forza Horizon 4, we come to the first game that simply won't run on older Intel GPUs. It's a Windows 10 universal app and requires DX12, so you need at least a Broadwell (5th Gen) Intel CPU. Otherwise, performance is quite good on the AMD solutions, with the best result of the games we tested. The 3400G breaks 100 fps and even keeps minimums above 60, and the GTX 1050 is only 35% faster than the 3400G's Vega 11 Graphics. Intel's UHD 630 is back in the dumps, with the 3200G beating it by 175%, though it does manage a playable 31 fps: not silky smooth, but it should suffice in a pinch.

Metro Exodus ends up being one of the better showings for Intel's UHD 630, as the 3200G is 'only' 88% faster. This is another game where GPU memory bandwidth tends to be a bigger bottleneck, and you still can't get 30 fps with Intel. The HD 4600 would also launch the game and run for maybe 10-15 seconds before locking up, but not at acceptable framerates; we've omitted it from the results because it couldn't complete the benchmark. The GTX 1050 is back to a comfortable 75% lead over the closest APU.

Red Dead Redemption 2 is the most demanding game we tested, with performance of only 46 fps on the 3400G Vega 11, even at 720p and minimum quality. It's still playable at least, though not on Intel's UHD 630, where framerates are in the low-to-mid teens. We tested with the Vulkan API, and like Forza, HD 4600 can't even attempt to run the game. The 3200G with Vega 8 notches up another big lead of 165% over UHD 630, while the GTX 1050 has another relatively close result, with the dedicated GPU leading the 3400G and Vega 11 by only 45%.

Shadow of the Tomb Raider is back to business as usual and comes closest to matching our overall average results. The GTX 1050 leads the 3400G's Vega 11 by 74%, while the 3200G's Vega 8 is 150% faster than UHD 630. All of the AMD APUs manage a very playable 50 fps or more, with minimums above 30 fps. Intel, on the other hand, needs to double its UHD 630 performance to hit an acceptable level, which it theoretically does with something like the Core i7-1065G7, but I'm still working on getting one of those to run my own tests.

Last, we have Strange Brigade, which, like RDR2, only supports the DX12 and Vulkan APIs. That knocks out HD 4600, but the remaining GPUs can all run it at acceptable levels, and even smooth levels of 60+ fps on the AMD APUs. This is one of just three games we tested where 60 fps on AMD's integrated graphics is possible, and not coincidentally also one of the three games where Intel's UHD 630 breaks 30 fps.

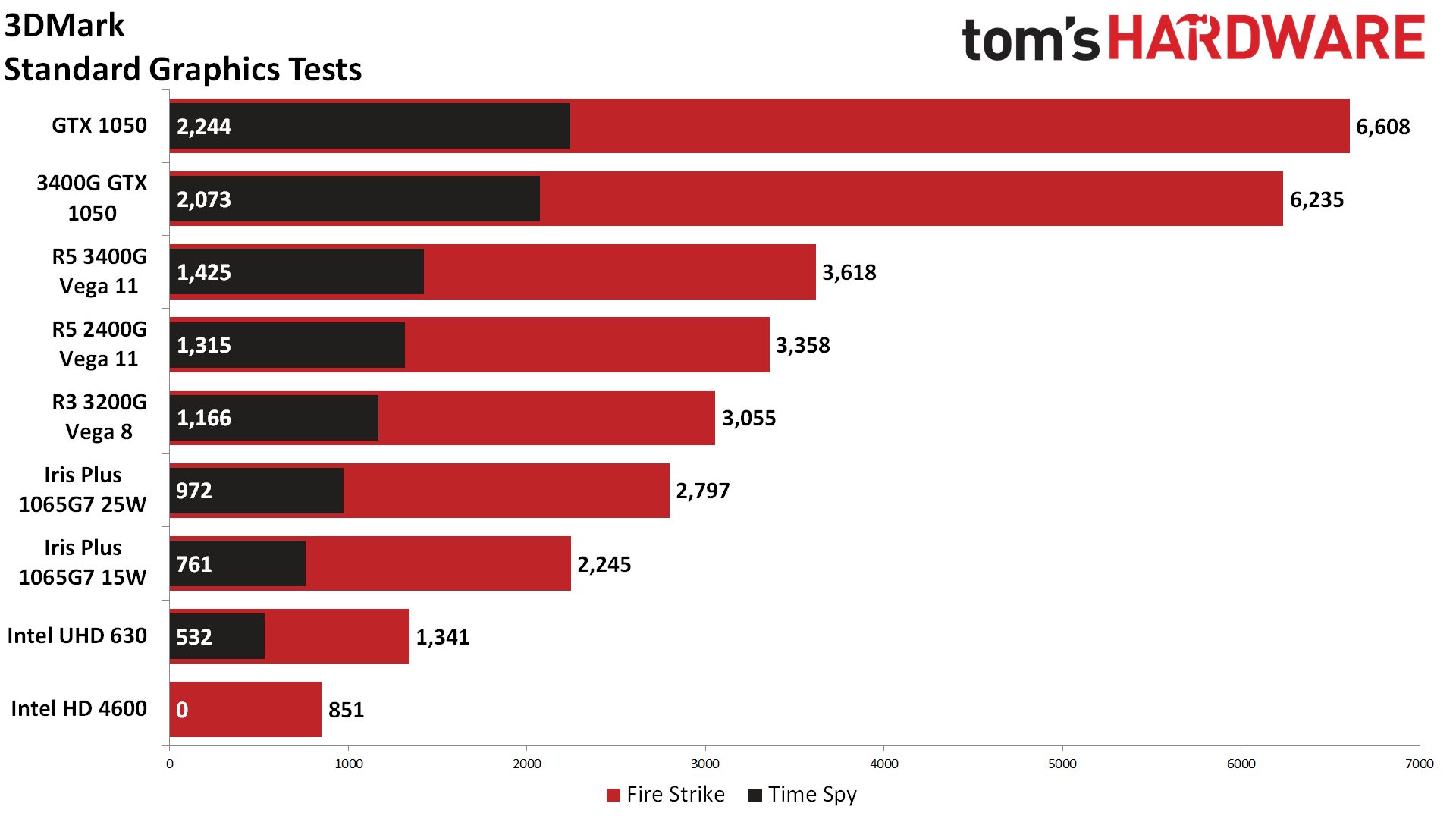

We also wanted to look at synthetic graphics performance, as measured by 3DMark Fire Strike and Time Spy. Time Spy requires DX12, so again the HD 4600 can't run it, but the rest of the GPUs could. In the past, 3DMark has been accused of being overly kind to Intel, or perhaps it's that Intel has put more effort into optimizing its drivers for 3DMark. But taking the overall gaming performance we showed earlier, the 3400G Vega 11 was 168% faster than the 9700K UHD 630. In Fire Strike, the result was nearly identical: Vega 11 leads by 170%. Time Spy also matched up perfectly, showing Vega 11 with a 168% lead.

Which isn't to say that 3DMark is the only testing needed. Looking at the GTX 1050 GPU shows at least one point of contention. In our overall metric, the GTX 1050 was 72% faster than the 3400G Vega 11. In Fire Strike, the 1050 is 83% faster, slightly more favorable to the dedicated GPU. Time Spy, on the other hand, drops the lead to just 57%, a massive swing, and that was only with multiple runs. One run even had a score that was lower than the 3400G.

Part of that is going to be DirectX 12, but Time Spy is also a newer 'forward-looking' benchmark that taxes the 1050's limited 2GB of VRAM. The benchmark tells you as much ("Your hardware may not be compatible"), and it skews the results. It's not wrong as such, but it's important to not simply take such results at face value.

With this round of testing out of the way (and a large part of why we wanted to do this was to prepare for the incoming Xe Graphics and AMD Renoir launches), inevitably, people wonder why the gulf between iGPU and dGPU (integrated and discrete GPUs) remains so large. Take one look at the PlayStation 5 and Xbox Series X specs, and it's clearly possible to make something much faster. So why hasn't this happened?

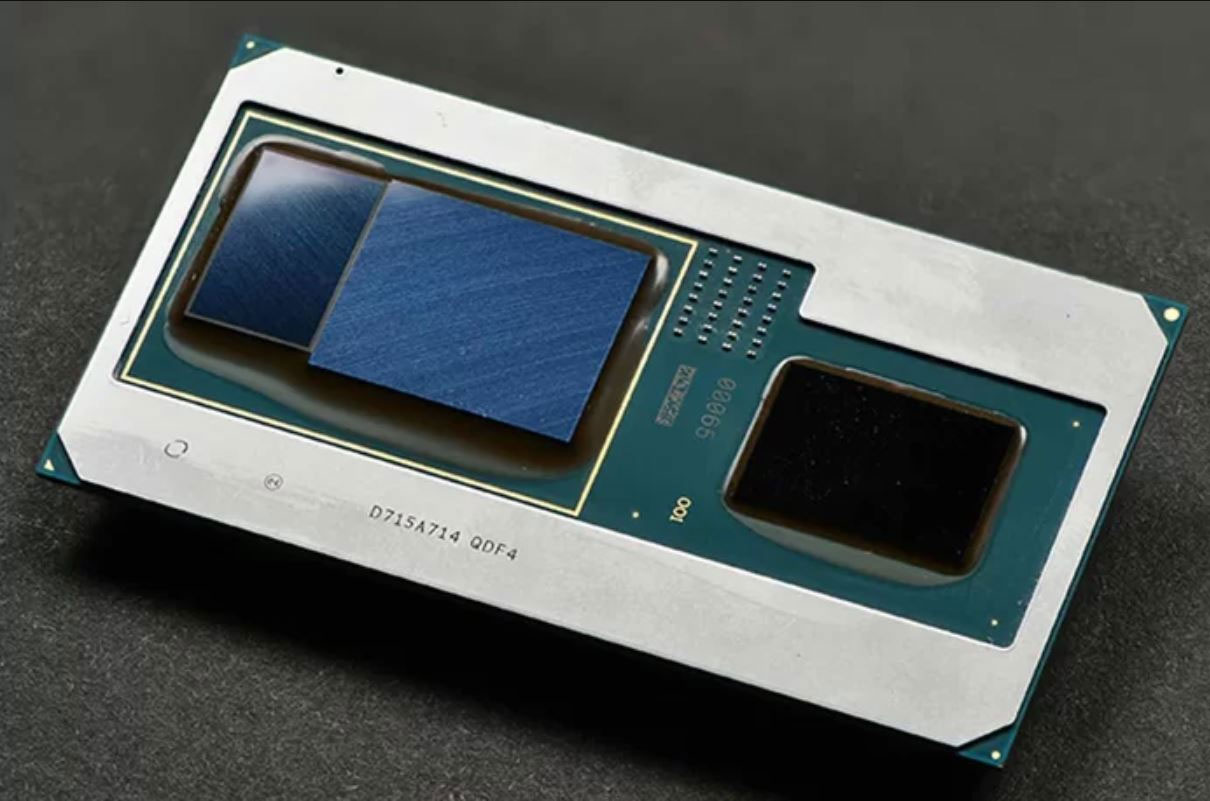

First, it's not entirely correct to say that this hasn't happened in the PC space. Intel shocked a lot of us when it announced Kaby Lake G in 2018, which combined a 4-core/8-thread Kaby Lake (7th Gen) CPU with a custom AMD Vega M GPU, and tacked on 4GB of HBM2 for good measure. We looked at how the Intel NUC with a Core i7-8809G performed at the time. In short, performance was decent, nearly matching an RX 570, which is significantly faster than anything we've included here. There were just a few problems.

First, Kaby Lake G was incredibly expensive for the level of performance it delivered. Intel likes its profit margins, and the cost of the Intel CPU, AMD GPU, and HBM2 memory was not going to be cheap. AMD sells the Ryzen 5 3400G for $150, but the only way to get the Core i7-8809G was either with an expensive NUC or an expensive laptop.

Second, and perhaps more critically, support was a joke. Intel initially said it would "regularly" update the graphics drivers for the Vega M GPU, but that didn't actually happen. There was a gap of nearly 12 months where no new drivers were made available. Then Intel finally passed the buck to AMD and said users should download AMD's drivers, and less than two months later, AMD removed Vega M from its list of drivers.

But the biggest issue is that higher-performance integrated graphics is a limited market. It makes sense for a laptop where tighter integration can reduce the size and complexity, plus you can get better power balancing between the CPU and GPU. For the desktop, though, you're better off getting a dedicated CPU and a dedicated GPU, which you can then upgrade separately.

That's not the way the console world works, and when Sony or Microsoft are willing to foot the bill for custom silicon, impressive things can be done. The current PS4 Pro and Xbox One X pack 36 CUs and 40 CUs, respectively. That's three to four times as many GPU clusters as the Ryzen 5 3400G's Vega 11 Graphics (the 11 comes from the number of CUs). The PS5 will stick with 36 CUs, but they'll be AMD's new RDNA 2 architecture, which potentially boosts performance per watt by over 100% compared to the older GCN architecture. Xbox Series X will kick that number up to 52 CUs, again with the RDNA 2 architecture.

More importantly for consoles, they can integrate a bunch of high-performance memory: GDDR5 for the current stuff and GDDR6 for the upcoming consoles. They don't need to worry about users wanting to upgrade the RAM, which makes it possible to use higher performance GDDR5/GDDR6 memory. The Xbox Series X will include 16GB of 14 Gbps GDDR6 running on a 512-bit memory interface, giving 896 GBps of total bandwidth. Even with 'overclocked' DDR4-3200 system memory running in a dual-channel configuration, traditional PC integrated graphics solutions share the resulting 51.2 GBps bandwidth with the CPU. That's a huge bottleneck.
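The bandwidth figures above follow from a simple formula: transfers per second multiplied by bytes per transfer. The Python sketch below reproduces that arithmetic. It assumes a 64-bit data path per DDR4 channel, which the article does not state, and it takes the GDDR6 configuration exactly as quoted in the text; `peak_bandwidth_gbps` is a name chosen for illustration.

```python
def peak_bandwidth_gbps(transfer_rate_mtps, bus_width_bits):
    """Peak memory bandwidth in GB/s from transfer rate (MT/s) and bus width (bits)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mtps * bytes_per_transfer / 1000.0


# Dual-channel DDR4-3200: two channels, assumed 64 bits wide each.
ddr4_3200_dual_channel = peak_bandwidth_gbps(3200, 2 * 64)

# GDDR6 at 14 Gbps per pin (14,000 MT/s) on the interface width quoted in the text.
gddr6_as_quoted = peak_bandwidth_gbps(14000, 512)

print(f"DDR4-3200 dual channel: {ddr4_3200_dual_channel:.1f} GB/s")
print(f"GDDR6 as quoted:        {gddr6_as_quoted:.1f} GB/s")
```

These are theoretical peaks. An integrated GPU sees less in practice, because the CPU competes for the same memory channels.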

We can see this in the performance difference between AMD's Vega 8 and Vega 11 Graphics in the above charts. Vega 8 runs at 1250 MHz and has a theoretical 1280 GFLOPS of compute performance, while Vega 11 runs at 1400 MHz and has 1971 GFLOPS of compute. In theory, Vega 11 should be 54% faster than Vega 8. In our testing, the largest lead was 22% (Forza Horizon 4), the smallest was 2% (Final Fantasy XIV), and on average, the difference was 12%. The reason for that is mostly the memory bandwidth bottleneck.
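The theoretical GFLOPS figures can be reproduced from the CU counts and clocks. This Python sketch assumes 64 shaders per compute unit and two floating-point operations per shader per clock (one fused multiply-add); neither constant is stated in the article, though both are the usual figures for AMD's GCN-based Vega parts.

```python
SHADERS_PER_CU = 64          # assumption: shader count per GCN/Vega compute unit
FLOPS_PER_SHADER_CLOCK = 2   # assumption: one fused multiply-add counts as two operations


def theoretical_gflops(compute_units, clock_mhz):
    """Peak single-precision GFLOPS for a given CU count and clock speed."""
    flops_per_clock = compute_units * SHADERS_PER_CU * FLOPS_PER_SHADER_CLOCK
    return flops_per_clock * clock_mhz / 1000.0


vega8 = theoretical_gflops(8, 1250)
vega11 = theoretical_gflops(11, 1400)
advantage = (vega11 / vega8 - 1.0) * 100.0

print(f"Vega 8:  {vega8:.0f} GFLOPS")
print(f"Vega 11: {vega11:.0f} GFLOPS")
print(f"Theoretical advantage: {advantage:.0f}%")
```

Set against the 12% average lead measured in testing, the theoretical gap shows how much of the extra compute goes unused when both GPUs share the same memory bandwidth.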

Another example is the GTX 1050, which has a theoretical 1,862 GFLOPS of performance. AMD and Nvidia GPUs aren't the same, however, and Nvidia usually gets about 10% more effective performance per GFLOPS. We see this, for instance, with the GTX 1070 Ti with 8,186 GFLOPS, which ends up performing around the same level as a Vega 56 with 10,544 GFLOPS. Except the 1070 Ti actually runs at closer to 1.85 GHz, so basically 9,000 GFLOPS. Still, AMD needed about 15% more GFLOPS to match Nvidia with the previous architectures. So why does the GTX 1050 end up performing 72% faster? Simple: It has over twice the memory bandwidth.

If AMD or Intel wants to create a true high-performance integrated graphics solution, it will need a lot more memory bandwidth. We already see some of this in Ice Lake, with official support for LPDDR4-3733 memory (59.7 GBps compared to just 38.4 GBps for Coffee Lake U-series with DDR4-2400 memory). But integrated graphics should benefit from memory bandwidth up to and beyond 100 GBps. DDR5-6400 will basically double the bandwidth of DDR4-3200 memory, but we won't see support for DDR5 in PCs until 2021.

As a potentially interesting aside, initial leaks of Intel's future Rocket Lake CPUs, which may have Xe Graphics, surfaced recently. It's only 3DMark Fire Strike and Time Spy, but the numbers aren't particularly impressive. The Time Spy result is only 14% higher than UHD 630, while Fire Strike is 30% faster. And, as noted earlier, 3DMark scores can be kinder to Intel's GPUs than actual gaming tests. Still, we don't know the Rocket Lake GPU configuration; as a desktop chip, Intel might again be neutering performance. Mobile Coffee Lake, for instance, is available with up to twice as many Execution Units (EUs) as desktop Coffee Lake, even though it generally ends up TDP limited. We'll have to wait and see what Rocket Lake really brings to the table in late 2020 or early 2021.

Another alternative, which Intel already has tried, is various forms of dedicated GPU caching or dedicated VRAM. Earlier versions of Iris Pro Graphics included up to a 128MB eDRAM cache, and Kaby Lake G included a 4GB HBM2 stack. Both helped alleviate the need for lots of system memory bandwidth, but they of course cost extra. Pairing 'cheap' integrated graphics with 'expensive' integrated VRAM sort of defeats the purpose. But that may change. Intel has 3D chip stacking technology that could reduce the cost and footprint of dedicated GPU VRAM. It's called Foveros, and that's something we're looking forward to testing in future products.

AMD meanwhile didn't change much with its Renoir integrated graphics. Clock speeds are up to 350 MHz higher than the 3400G, but it's now a Vega 8 GPU. That's likely because AMD knows stuffing in more GPU cores without boosting memory bandwidth is mostly an exercise in futility. The hope is that future Zen 3 APUs will include Navi 2x GPUs and potentially address the bandwidth issue, but don't hold your breath for that. Plus, Zen 3 APUs are likely still a year or more off.

In short, there are ways to make integrated graphics faster and better, but they cost money. For desktop users, it will remain far easier to just buy a decent dedicated graphics card. Meanwhile, laptop and smartphone makers are working to improve performance per watt, and some promising technologies are coming. In the meantime, PC integrated graphics solutions are going to remain a bottleneck, and even more memory bandwidth likely won't change things.

After all, Intel ships more GPUs than AMD and Nvidia combined, and yet it's now planning to enter the discrete GPU market. Intel may still look at HBM2 or stacked chips to add dedicated RAM for future integrated GPUs, but we don't expect those to be desktop solutions.

Source: https://www.tomshardware.com/features/amd-vs-intel-integrated-graphics
